Intelligent assistants on mobile devices have significantly advanced language-based interactions for performing simple daily tasks, such as setting a timer or turning on a flashlight. Despite the progress, these assistants still face limitations in supporting conversational interactions in mobile user interfaces (UIs), where many user tasks are performed. For example, they cannot answer a user’s question about specific information displayed on a screen. An agent would need a computational understanding of graphical user interfaces (GUIs) to achieve such capabilities.

Prior research has investigated several important technical building blocks to enable conversational interaction with mobile UIs, including summarizing a mobile screen so users can quickly understand its purpose, mapping language instructions to UI actions, and modeling GUIs so that they are more amenable to language-based interaction. However, each of these addresses only a limited aspect of conversational interaction and requires considerable effort in curating large-scale datasets and training dedicated models. Furthermore, there is a broad spectrum of conversational interactions that can occur on mobile UIs. Therefore, it is imperative to develop a lightweight and generalizable approach to realize conversational interaction.

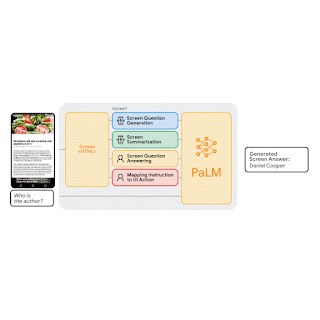

In “Enabling Conversational Interaction with Mobile UI using Large Language Models”, presented at CHI 2023, we investigate the viability of using large language models (LLMs) to enable diverse language-based interactions with mobile UIs. Recent pre-trained LLMs, such as PaLM, have demonstrated the ability to adapt to various downstream language tasks when prompted with a handful of examples of the target task. We present a set of prompting techniques that enable interaction designers and developers to quickly prototype and test novel language interactions with users, which saves time and resources before investing in dedicated datasets and models. Since LLMs only take text tokens as input, we contribute a novel algorithm that generates the text representation of mobile UIs. Our results show that this approach achieves competitive performance using only two data examples per task. More broadly, we demonstrate LLMs’ potential to fundamentally transform the future workflow of conversational interaction design.

Animation showing our work on enabling various conversational interactions with mobile UI using LLMs.

Prompting LLMs with UIs

LLMs support in-context few-shot learning via prompting: instead of fine-tuning or re-training models for each new task, one can prompt an LLM with a few input and output data exemplars from the target task. For many natural language processing tasks, such as question-answering or translation, few-shot prompting performs competitively with benchmark approaches that train a model specific to each task. However, language models can only take text input, while mobile UIs are multimodal, containing text, image, and structural information in their view hierarchy data (i.e., the structural data containing detailed properties of UI elements) and screenshots. Moreover, directly inputting the view hierarchy data of a mobile screen into LLMs is not feasible, as it contains excessive information, such as detailed properties of each UI element, which can exceed the input length limits of LLMs.

To address these challenges, we developed a set of techniques to prompt LLMs with mobile UIs. We contribute an algorithm that generates the text representation of mobile UIs, using depth-first search traversal to convert the Android UI’s view hierarchy into HTML syntax. We also use chain of thought prompting, which involves generating intermediate results and chaining them together to arrive at the final output, to elicit the reasoning ability of the LLM.
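The depth-first conversion can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration, not the algorithm from the paper: the dictionary-based node format, the `TAG_FOR_CLASS` mapping, the choice of attributes, and the rule of skipping container nodes are all hypothetical.

```python
from html import escape

# Hypothetical mapping from Android widget classes to HTML tags.
# The source does not list the actual mapping; this is an assumption.
TAG_FOR_CLASS = {
    "TextView": "p",
    "Button": "button",
    "ImageView": "img",
    "EditText": "input",
}


def view_hierarchy_to_html(root):
    """Flatten a view-hierarchy tree into HTML-like lines via depth-first traversal."""
    lines = []
    next_id = 0

    def visit(node):
        nonlocal next_id
        tag = TAG_FOR_CLASS.get(node.get("class", ""))
        # Only widgets with a known tag are emitted; layout containers are
        # skipped so they do not consume the model's limited input length.
        if tag is not None:
            attrs = [f"id={next_id}"]
            resource_id = node.get("resource_id")
            if resource_id:
                attrs.append(f'class="{escape(resource_id)}"')
            text = escape(node.get("text") or "")
            lines.append(f"<{tag} {' '.join(attrs)}>{text}</{tag}>")
            next_id += 1
        # Depth-first: descend into each child before moving to the next sibling.
        for child in node.get("children", []):
            visit(child)

    visit(root)
    return "\n".join(lines)


# Hypothetical screen with two input-related widgets and a button.
screen = {
    "class": "LinearLayout",
    "children": [
        {"class": "TextView", "text": "Min price"},
        {"class": "EditText", "resource_id": "min_price"},
        {"class": "Button", "text": "Apply", "resource_id": "apply"},
    ],
}
print(view_hierarchy_to_html(screen))
```

Assigning a numeric `id` to each emitted element gives the model a compact handle to refer back to a specific widget, which is useful for tasks that must name a target element.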

Animation showing the process of few-shot prompting LLMs with mobile UIs.

Our prompt design starts with a preamble that explains the prompt’s purpose. The preamble is followed by multiple exemplars, each consisting of the input, a chain of thought (if applicable), and the output for the task. Each exemplar’s input is a mobile screen in the HTML syntax. Following the input, chains of thought can be provided to elicit logical reasoning from LLMs. This step is not shown in the animation above as it is optional. The task output is the desired outcome for the target task, e.g., a screen summary or an answer to a user question. Few-shot prompting can be achieved by including more than one exemplar in the prompt. During prediction, we feed the model the prompt with a new input screen appended at the end.
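As a rough sketch, the prompt structure described above could be assembled as follows. The function name `build_prompt`, the exemplar dictionary keys, and the `Screen:` / `Reasoning:` / `Output:` labels are hypothetical choices for illustration; the source does not specify the exact prompt wording.

```python
def build_prompt(preamble, exemplars, new_screen_html):
    """Assemble a few-shot prompt: preamble, exemplars, then the new input screen.

    Each exemplar is a dict with "screen" (HTML text), "output", and an
    optional "chain_of_thought". These key names are assumptions.
    """
    parts = [preamble]
    for exemplar in exemplars:
        parts.append(f"Screen:\n{exemplar['screen']}")
        # The chain of thought is optional and only included when provided.
        if exemplar.get("chain_of_thought"):
            parts.append(f"Reasoning: {exemplar['chain_of_thought']}")
        parts.append(f"Output: {exemplar['output']}")
    # The new screen goes last so the model continues from it.
    parts.append(f"Screen:\n{new_screen_html}")
    return "\n\n".join(parts)


# Hypothetical two-shot prompt for screen summarization.
exemplars = [
    {
        "screen": "<p id=0>Inbox</p>\n<button id=1>Compose</button>",
        "chain_of_thought": "The screen lists mail and offers a compose button.",
        "output": "An email inbox screen.",
    },
    {
        "screen": "<p id=0>Timer</p>\n<button id=1>Start</button>",
        "output": "A timer screen.",
    },
]
prompt = build_prompt(
    "Summarize the mobile screen described in HTML.",
    exemplars,
    "<p id=0>Settings</p>\n<button id=1>Wi-Fi</button>",
)
print(prompt)
```

Moving from one-shot to two-shot prompting only means adding another entry to `exemplars`, which is what makes this kind of prototyping inexpensive.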

Experiments

We conducted comprehensive experiments with four pivotal modeling tasks: (1) screen question-generation, (2) screen summarization, (3) screen question-answering, and (4) mapping instruction to UI action. Experimental results show that our approach achieves competitive performance using only two data examples per task.

Task 1: Screen question generation

Given a mobile UI screen, the goal of screen question-generation is to synthesize coherent, grammatically correct natural language questions relevant to the UI elements requiring user input.

We found that LLMs can leverage the UI context to generate questions for relevant information. LLMs considerably outperformed the heuristic approach (template-based generation) in question quality.

We also revealed LLMs’ ability to combine relevant input fields into a single question for efficient communication. For example, the filters asking for the minimum and maximum price were combined into a single question: “What’s the price range?”

We observed that the LLM could use its prior knowledge to combine multiple related input fields to ask a single question.

In an evaluation, we solicited human ratings on whether the questions were grammatically correct (Grammar) and relevant to the input fields for which they were generated (Relevance). In addition to the human-labeled language quality, we automatically examined how well LLMs can cover all the elements that need questions generated for them (Coverage F1). We found that the questions generated by the LLM had almost perfect grammar (4.98/5) and were highly relevant to the input fields displayed on the screen (92.8%). Additionally, the LLM performed well in terms of covering the input fields comprehensively (95.8%).

| Metric | Template | 2-shot LLM |
|---|---|---|
| Grammar | 3.6 (out of 5) | 4.98 (out of 5) |
| Relevance | 84.1% | 92.8% |
| Coverage F1 | 100% | 95.8% |

Task 2: Screen summarization

Screen summarization is the automatic generation of descriptive language overviews that cover essential functionalities of mobile screens. The task helps users quickly understand the purpose of a mobile UI, which is particularly useful when the UI is not visually accessible.

Our results showed that LLMs can effectively summarize the essential functionalities of a mobile UI. By using UI-specific text, they can generate more accurate summaries than the Screen2Words benchmark model that we previously introduced, as highlighted in the colored text and boxes below.

Example summary generated by 2-shot LLM. We found the LLM is able to use specific text on the screen to compose more accurate summaries.

Interestingly, we observed LLMs using their prior knowledge to deduce information not presented in the UI when creating summaries. In the example below, the LLM inferred that the subway stations belong to the London Tube system, while the input UI does not contain this information.

LLM uses its prior knowledge to help summarize the screens.

Human evaluation rated LLM summaries as more accurate than the benchmark, yet they scored lower on metrics like BLEU. The mismatch between perceived quality and metric scores echoes recent work showing LLMs write better summaries despite automatic metrics not reflecting it.

Left: Screen summarization performance on automatic metrics. Right: Screen summarization accuracy voted by human evaluators.

Task 3: Screen question-answering

Given a mobile UI and an open-ended question asking for information regarding the UI, the model should provide the correct answer. We focus on factual questions, which require answers based on information presented on the screen.

Example results from the screen QA experiment. The LLM significantly outperforms the off-the-shelf QA baseline model.

We report performance using four metrics: Exact Matches (predicted answer identical to ground truth), Contains GT (answer fully containing ground truth), Sub-String of GT (answer is a sub-string of ground truth), and the Micro-F1 score based on shared words between the predicted answer and ground truth across the entire dataset.

Our results showed that LLMs can correctly answer UI-related questions, such as “what is the headline?”. The LLM performed significantly better than the baseline QA model DistillBERT, achieving a 66.7% fully correct answer rate. Notably, the 0-shot LLM achieved an exact match score of 30.7%, indicating the model’s intrinsic question answering capability.

| Models | Exact Matches | Contains GT | Sub-String of GT | Micro-F1 |
|---|---|---|---|---|
| 0-shot LLM | 30.7% | 6.5% | 5.6% | 31.2% |
| 1-shot LLM | 65.8% | 10.0% | 7.8% | 62.9% |
| 2-shot LLM | 66.7% | 12.6% | 5.2% | 64.8% |
| DistillBERT | 36.0% | 8.5% | 9.9% | 37.2% |
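To make the four answer-matching metrics concrete, here is a minimal sketch of how they might be computed. It rests on several assumptions not confirmed by the source: answers are compared after lowercasing and whitespace normalization, the three match categories are treated as mutually exclusive, and Micro-F1 pools word-overlap counts over the whole dataset before computing precision and recall. The function names are hypothetical.

```python
from collections import Counter


def normalize(text):
    """Lowercase and collapse whitespace (an assumed normalization)."""
    return " ".join(text.lower().split())


def qa_metrics(predictions, ground_truths):
    """Return exact-match, contains-GT, and substring-of-GT rates plus Micro-F1."""
    if not predictions or len(predictions) != len(ground_truths):
        raise ValueError("need equally sized, non-empty answer lists")
    exact = contains = substring = 0
    shared = pred_total = gt_total = 0
    for pred, gt in zip(predictions, ground_truths):
        p, g = normalize(pred), normalize(gt)
        # Categories are checked in order, so each answer counts at most once.
        if p == g:
            exact += 1
        elif g and g in p:
            contains += 1
        elif p and p in g:
            substring += 1
        # Pool word-overlap counts across the dataset for the micro average.
        p_words, g_words = Counter(p.split()), Counter(g.split())
        shared += sum((p_words & g_words).values())
        pred_total += sum(p_words.values())
        gt_total += sum(g_words.values())
    n = len(predictions)
    precision = shared / pred_total if pred_total else 0.0
    recall = shared / gt_total if gt_total else 0.0
    denom = precision + recall
    return {
        "exact_match": exact / n,
        "contains_gt": contains / n,
        "substring_of_gt": substring / n,
        "micro_f1": 2 * precision * recall / denom if denom else 0.0,
    }


# Hypothetical predictions for the question "what is the headline?"
scores = qa_metrics(
    ["Breaking news", "The headline is breaking news", "Breaking", "Sports"],
    ["breaking news"] * 4,
)
print(scores)
```

Under the mutual-exclusivity assumption, the first three rates can be summed to give the share of answers that at least partially match the ground truth.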

Task 4: Mapping instruction to UI action

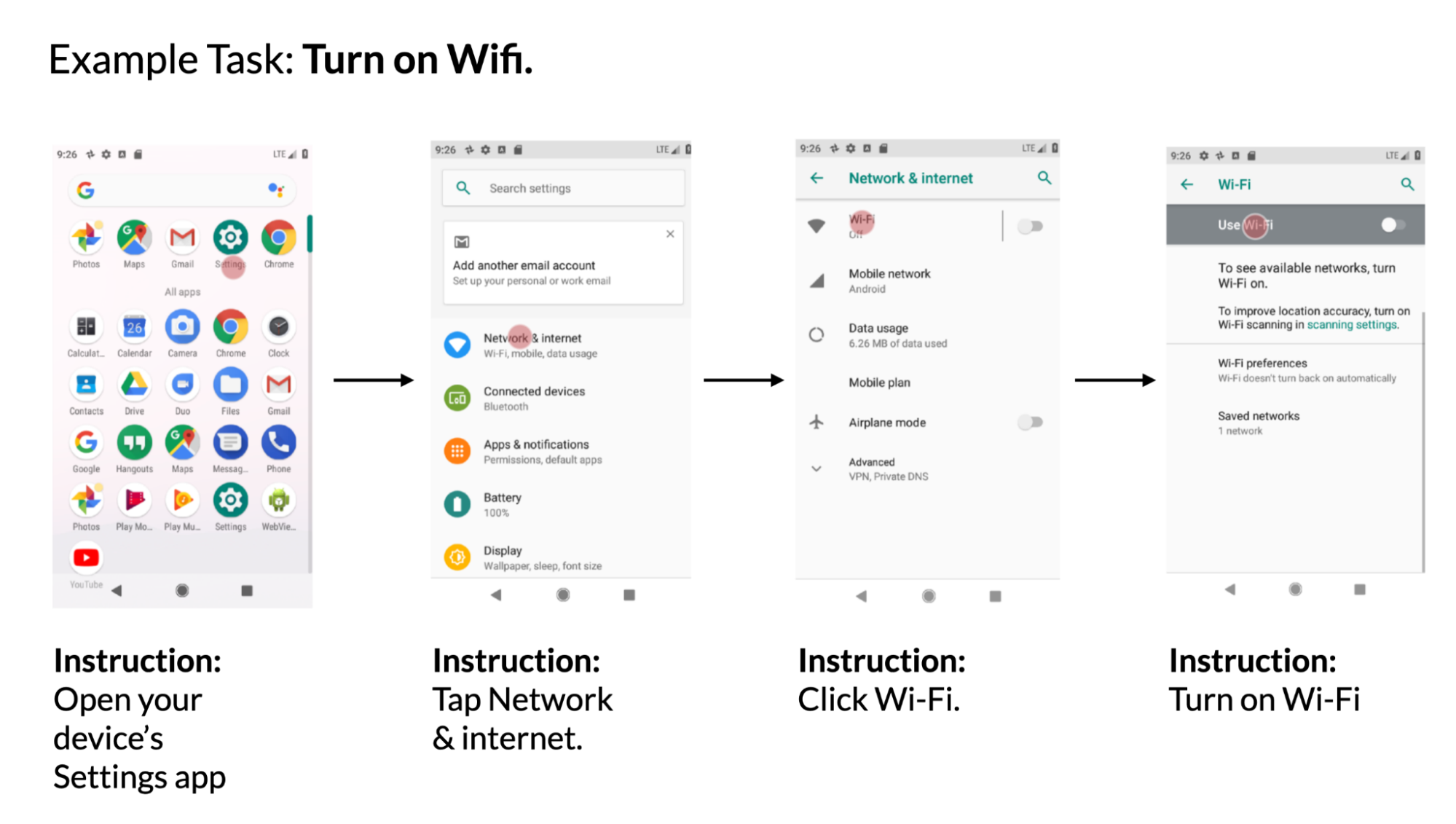

Given a mobile UI screen and a natural language instruction to control the UI, the model needs to predict the ID of the object on which to perform the instructed action. For example, when instructed with “Open Gmail,” the model should correctly identify the Gmail icon on the home screen. This task is useful for controlling mobile apps using language input such as voice access. We introduced this benchmark task previously.

Example using data from the PixelHelp dataset. The dataset contains interaction traces for common UI tasks such as turning on wifi. Each trace contains multiple steps and corresponding instructions.

We assessed the performance of our approach using the Partial and Complete metrics from the Seq2Act paper. Partial refers to the percentage of correctly predicted individual steps, while Complete measures the portion of accurately predicted entire interaction traces. Although our LLM-based method did not surpass the benchmark trained on massive datasets, it still achieved remarkable performance with just two prompted data examples.

| Models | Partial | Complete |
|---|---|---|
| 0-shot LLM | 1.29 | 0.00 |
| 1-shot LLM (cross-app) | 74.69 | 31.67 |
| 2-shot LLM (cross-app) | 75.28 | 34.44 |
| 1-shot LLM (in-app) | 78.35 | 40.00 |
| 2-shot LLM (in-app) | 80.36 | 45.00 |
| Seq2Act | 89.21 | 70.59 |
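The step-level and whole-trace metrics described above can be sketched as follows. This is an illustrative reading of the definitions in the text, not the Seq2Act evaluation code; the function name, the representation of a trace as a list of predicted object IDs, and the rule that a trace with a missing step cannot count as fully correct are assumptions.

```python
def trace_metrics(predicted_traces, target_traces):
    """Return (step_accuracy, trace_accuracy) as percentages.

    Each trace is a list of UI object IDs, one per instruction step.
    """
    if not target_traces or len(predicted_traces) != len(target_traces):
        raise ValueError("need equally sized, non-empty trace lists")
    total_steps = correct_steps = correct_traces = 0
    for predicted, target in zip(predicted_traces, target_traces):
        matches = [p == t for p, t in zip(predicted, target)]
        correct_steps += sum(matches)
        total_steps += len(target)
        # A trace is fully correct only if every one of its steps is correct.
        if len(predicted) == len(target) and all(matches):
            correct_traces += 1
    step_accuracy = 100.0 * correct_steps / total_steps
    trace_accuracy = 100.0 * correct_traces / len(target_traces)
    return step_accuracy, trace_accuracy


# Hypothetical data: two traces, the second with one wrong step.
step_acc, trace_acc = trace_metrics(
    predicted_traces=[[3, 7], [1, 4, 9]],
    target_traces=[[3, 7], [1, 5, 9]],
)
print(step_acc, trace_acc)  # 80.0 50.0
```

A single wrong step invalidates its whole trace, which is why the whole-trace score sits well below the step-level score in the table.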

Takeaways and conclusion

Our study shows that prototyping novel language interactions on mobile UIs can be as easy as designing a data exemplar. As a result, an interaction designer can rapidly create functioning mock-ups to test new ideas with end users. Moreover, developers and researchers can explore different possibilities of a target task before investing significant effort into developing new datasets and models.

We investigated the feasibility of prompting LLMs to enable various conversational interactions on mobile UIs. We proposed a suite of prompting techniques for adapting LLMs to mobile UIs. We conducted extensive experiments with the four important modeling tasks to evaluate the effectiveness of our approach. The results showed that, compared to traditional machine learning pipelines that consist of expensive data collection and model training, one could rapidly realize novel language-based interactions using LLMs while achieving competitive performance.

Acknowledgements

We thank our paper co-author Gang Li, and appreciate the discussions and feedback from our colleagues Chin-Yi Cheng, Tao Li, Yu Hsiao, Michael Terry and Minsuk Chang. Special thanks to Muqthar Mohammad and Ashwin Kakarla for their invaluable assistance in coordinating data collection. We thank John Guilyard for helping create animations and graphics in the blog.
